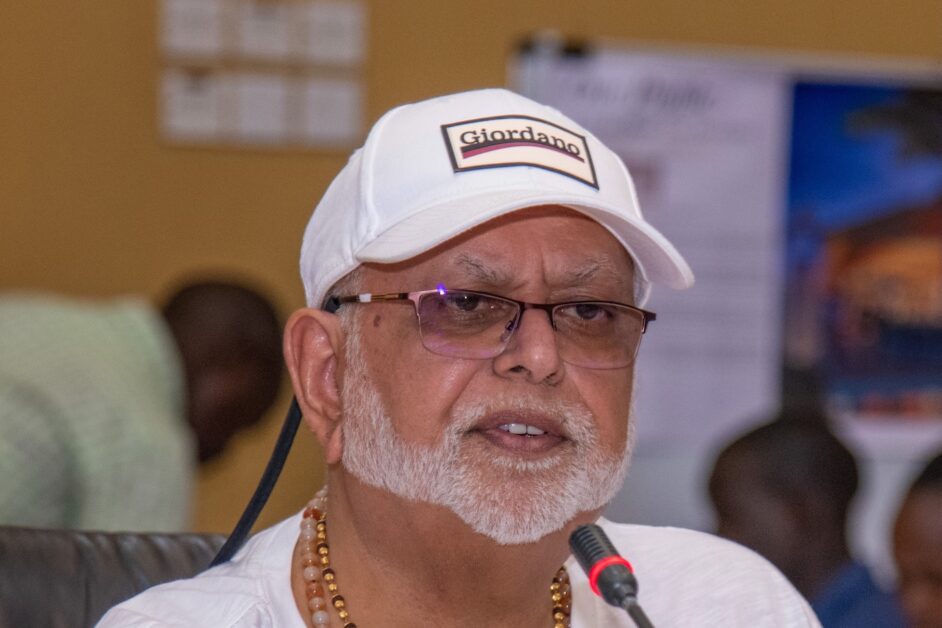

This week, Ugandans received a chilling reminder that AI-driven fraud is no longer a distant threat: it is here. A deepfake video began circulating online, falsely portraying billionaire businessman Dr. Sudhir Ruparelia endorsing a fraudulent investment platform. Using an AI-generated voice and footage manipulated from a legitimate interview, the scam claimed that investors could earn UGX 10 million per month from a UGX 915,000 stake, and it invoked government interference and censorship to lend the offer credibility. Dr. Sudhir quickly distanced himself, warning: “Please be careful, it is a scam.”

The incident sent shockwaves across Uganda’s business and tech communities. It also echoed a growing global concern: cybercriminals are now weaponizing AI to create scams so realistic that they fool even the most cautious individuals and organizations.

This article draws on the latest research and expert analysis from:

- The PwC Global Economic Crime and Fraud Survey 2024 (Uganda & Nigeria Reports)

- PwC’s East Africa CEO Surveys (2024 & 2025)

- The Deloitte 2024 AI and Cybercrime Risk Brief

- TransUnion’s Global Fraud Trends 2024

- Research from the World Economic Forum (WEF) and MIT Media Lab on deepfakes

- Real-world cases reported by FinCEN, UK authorities, and global cybersecurity experts

From cloned voices and deepfake impersonations to AI-generated phishing attacks and synthetic identities, the nature of fraud is evolving rapidly, and no one is immune.

In this guide, we outline 10 actionable ways you—whether a business leader, IT professional, or everyday digital citizen—can spot, avoid, and defend yourself against the rising threat of AI-assisted fraud and cyber scams.

1. Be Sceptical of Unsolicited Content and Requests

Scammers often use AI to create fake but convincing messages featuring trusted figures or institutions. Always pause and scrutinize unsolicited videos, emails, or social media posts – especially those pushing financial schemes or urgent requests. If a famous billionaire suddenly appears in a video urging you to invest, or a “bank official” emails about a limited-time offer, treat it as potential fraud until you have verified it. In the US, deepfake videos of Elon Musk promoting crypto scams have recently duped victims into losing hundreds of thousands of dollars. The rule of thumb: if it’s sensational, unsolicited, or too good to be true, be sceptical. Cross-check through official channels and don’t let the shock factor override your caution.
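To make “too good to be true” concrete, the sketch below works through the figures claimed in the Sudhir deepfake (UGX 10 million per month from a UGX 915,000 stake). The function names and the 10%-per-month plausibility ceiling are illustrative assumptions, not an industry or regulatory threshold.

```python
def payout_multiple(stake: float, monthly_payout: float) -> float:
    """Return how many times the stake the offer claims to pay out each month."""
    if stake <= 0:
        raise ValueError("stake must be positive")
    return monthly_payout / stake


def looks_too_good(stake: float, monthly_payout: float,
                   max_plausible_multiple: float = 0.10) -> bool:
    # max_plausible_multiple is an assumed ceiling (10% of the stake per month),
    # chosen only for illustration.
    return payout_multiple(stake, monthly_payout) > max_plausible_multiple


# Figures claimed in the deepfake video.
multiple = payout_multiple(915_000, 10_000_000)
print(f"Claimed monthly payout is {multiple:.1f}x the stake")
print("Treat as likely fraud:", looks_too_good(915_000, 10_000_000))
```

A promised payout of roughly eleven times the stake every month is far beyond anything a legitimate investment advertises, which is exactly the kind of signal that should trigger verification before any money moves.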

2. Verify Identities Through Secondary Channels

Always verify requests directly using a second method of communication, even when a message or call seems legitimate. AI can clone voices and videos, so a phone call from your CEO or a WhatsApp message from a friend might be an imposter. If you get an urgent request (for money transfer, sensitive data, or clicking a login link), don’t act immediately – instead, call the person on a known number or speak face-to-face if possible. Many companies have fallen victim to fraud by not double-checking.

In early 2024, an employee at a British firm was tricked into transferring $25 million after deepfake attackers impersonated her CFO and colleagues on a video call. Everything looked and sounded real, so she made 15 bank transfers – only later did she learn the executives on the call were AI-generated imposters. Such impersonation attacks prey on our trust. To guard against them, implement call-back protocols: for instance, if a “manager” emails to change a payment account, verify by calling them on a number you already have on file, not one supplied in the message. No legitimate superior or organization will fault you for taking a moment to confirm a request’s authenticity – in fact, reputable firms encourage it as a security step.
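A call-back protocol can be sketched in a few lines. The directory, names, and phone number below are hypothetical; the point is only that the number used for verification comes from records made in advance, never from the request itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of contact numbers recorded in advance
# (for example from HR records), never copied from an incoming request.
KNOWN_NUMBERS = {
    "cfo@example.com": "555-0100",
}


@dataclass
class PaymentRequest:
    requester: str        # who the message claims to be from
    callback_number: str  # number supplied inside the message (untrusted)
    amount: float


def number_to_call(request: PaymentRequest) -> Optional[str]:
    """Return the pre-registered number for the claimed requester, or None.

    The number supplied in the request is deliberately ignored, because an
    impostor controls everything inside the message.
    """
    return KNOWN_NUMBERS.get(request.requester.lower())


def may_proceed(request: PaymentRequest, confirmed_by_callback: bool) -> bool:
    # Proceed only if a known number exists AND the person confirmed on it.
    return number_to_call(request) is not None and confirmed_by_callback
```

In use, a request from an address that is not in the directory can never proceed, however convincing the voice or video attached to it.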

3. Educate Yourself and Your Team on AI-Enhanced Phishing

Phishing emails and texts remain a primary way criminals infiltrate individuals and organizations, and AI now makes them more deceptive. In the past, you might spot a scam by its poor grammar or generic text. Today, fraudsters use generative AI tools (including malicious ones marketed under names such as “FraudGPT”) to craft phishing messages that are polished, contextually tailored, and personalized at scale. This means a phishing email may be linguistically flawless and refer to details scraped from your social media or company website. Don’t rely on spelling mistakes as your cue. Instead, train yourself and your employees to look for other red flags: mismatched email addresses, unusual tone or timing, or requests that skip normal procedures. For example, if a vendor’s email asks for payment to a new account, confirm via phone. Regular security awareness training is crucial – run simulated phishing drills and keep everyone updated about the latest AI-aided scams.

Remember, phishing isn’t just email anymore: scammers may use AI chatbots on websites or fake “customer service” chats to trick you. Staying educated on these evolving tactics is one of the best defences.
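The “mismatched email addresses” red flag from this section can be partly automated. The sketch below uses Python’s standard `email.utils.parseaddr`; the trusted-domain list and the example addresses are hypothetical, and a real mail gateway would check far more than this.

```python
from email.utils import parseaddr

# Hypothetical allow-list of domains your organization actually deals with.
TRUSTED_DOMAINS = {"example-bank.com", "example-vendor.co.ug"}


def sender_red_flags(from_header: str, reply_to: str = "") -> list:
    """Return human-readable red flags found in one message's headers."""
    flags = []
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()

    if domain not in TRUSTED_DOMAINS:
        flags.append(f"sender domain '{domain}' is not on the trusted list")

    # A display name that mentions a trusted brand while the address is
    # somewhere else is a common impersonation pattern.
    normalized_name = display_name.lower().replace(" ", "-")
    for trusted in sorted(TRUSTED_DOMAINS):
        brand = trusted.split(".")[0]
        if brand in normalized_name and domain != trusted:
            flags.append(f"display name mentions '{brand}' but address is at '{domain}'")

    if reply_to:
        reply_domain = parseaddr(reply_to)[1].rpartition("@")[2].lower()
        if reply_domain and reply_domain != domain:
            flags.append(f"replies go to a different domain: '{reply_domain}'")
    return flags


for flag in sender_red_flags('"Example Bank Support" <help@examp1e-bank.com>',
                             reply_to="collect@mail.test"):
    print("RED FLAG:", flag)
```

Note the lookalike domain in the example (`examp1e` with a digit one): the message would read perfectly, yet the headers alone raise three flags.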

4. Recognize Deepfake Red Flags in Media

AI-generated fake videos or audio, known as deepfakes, can be very convincing, but they are rarely perfect. Glitches or inconsistencies often give them away if you know what to look for; inconsistent reflections in the eyes, for instance, are a known tell-tale sign, and training yourself to spot such anomalies can help you catch a fake video or image. One common tip from MIT researchers is to watch the eyes and facial movements: is the person blinking naturally and at a normal rate? Does the lip movement truly sync with the spoken words?

Deepfake videos sometimes have odd eye movements (or none at all) and slight lip-sync issues. Also, check the lighting and reflections – for instance, a real person’s eyes will normally show the same light reflection in both eyes, whereas deepfake images often fail this test. In the image above, researchers show how mismatched reflections (highlighted in circles) can signal a fake. Other red flags include skin or hair that does not match the rest of the face (e.g., a strangely ageless face with older-looking eyes), or an audio clip with unnatural cadence or odd background artifacts. If you see a viral video of a public figure doing something bizarre, switch your “deepfake radar” on – search for corroborating news from trusted outlets. For important communications (like a video conference with a partner or boss), don’t hesitate to ask questions or request a follow-up in person. Deepfakes are getting better, but a healthy dose of scrutiny can still reveal many of them.

5. Strengthen Authentication and Approval Processes

Enhance your defences by making it harder for fraudsters to succeed even when they do manage to deceive someone. Both individuals and organizations should use strong authentication and multi-step verification, especially for high-value transactions or account access. Start with multi-factor authentication (MFA) on all important accounts – this means that even if an AI-assisted hacker guesses or phishes your password, they still need a second factor (such as a confirmation on your phone), which is much harder to obtain. Many AI scams involve tricking someone inside a company into authorizing something illegitimate. To counter this, companies should implement rigorous approval processes: for example, require two authorized people to sign off on large fund transfers and mandate a verbal or in-person confirmation for requests that come via email.
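To show why a second factor is hard to steal, here is a minimal sketch of the time-based one-time password scheme (TOTP, RFC 6238) that many authenticator apps use. It is for illustration only; in practice you would rely on a vetted library or your identity provider, and the shared secret shown in the comments is the RFC’s published test value, not a real one.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at_time=None, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time) // step  # number of 30-second windows since the epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as defined in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at_time=None) -> bool:
    # Constant-time comparison avoids leaking how many digits matched.
    return hmac.compare_digest(totp(secret, at_time), submitted)


# RFC 6238 test secret; at time 59 the 8-digit code is 94287082.
print(totp(b"12345678901234567890", at_time=59, digits=8))
```

Because the code depends on a secret stored on your device and changes every 30 seconds, a stolen password alone is not enough to log in.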

In sectors with weaker controls, fraudsters thrive – PwC observed a rise in invoice and payment fraud in organizations with “nascent finance functions” in Africa. A robust internal process can stop a fake CEO or supplier scam in its tracks because the AI impostor won’t easily navigate a multilayer verification routine. Consider adopting a “zero trust” policy internally: trust no request fully, especially those involving money or sensitive data, until it’s verified through an independent path. These measures create speed bumps that can delay or deter criminals, buying time to detect the fraud before it’s too late.
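The two-person sign-off and independent confirmation described in this section can be expressed as a simple rule. The threshold, class, and names below are hypothetical and would need to match your own finance policy.

```python
from dataclasses import dataclass, field

DUAL_APPROVAL_THRESHOLD = 5_000_000  # hypothetical limit, in UGX


@dataclass
class Transfer:
    amount: int
    requested_by: str
    approvers: set = field(default_factory=set)
    confirmed_out_of_band: bool = False  # e.g. verbal or in-person confirmation

    def approve(self, approver: str) -> None:
        if approver == self.requested_by:
            raise PermissionError("requester cannot approve their own transfer")
        self.approvers.add(approver)

    def can_release(self) -> bool:
        if self.amount < DUAL_APPROVAL_THRESHOLD:
            return len(self.approvers) >= 1
        # Large transfers need two distinct approvers plus independent confirmation.
        return len(self.approvers) >= 2 and self.confirmed_out_of_band


transfer = Transfer(amount=20_000_000, requested_by="alice")
transfer.approve("bob")
print(transfer.can_release())  # still blocked: one approver, no confirmation
```

Even if an impostor convinces one employee, the transfer stays blocked until a second person and an independent channel agree.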

6. Guard Your Personal Data and Digital Footprint

Be mindful of the personal information you and your organization share publicly – data is the fuel for AI-driven fraud. Scammers gather tidbits from social media, data breaches, and online searches to craft convincing lies. For instance, if your LinkedIn profile shows your job and your company’s CFO’s name, a fraudster could target you with a tailored email impersonating that CFO. Limit what you post, and review privacy settings so strangers can’t easily scrape your contacts, photos, or resume. Synthetic identity fraud, where criminals combine real and fake info to create a new identity, is skyrocketing thanks to AI.

In fact, TransUnion reports that synthetic ID fraud is the fastest-growing type of fraud in 2024 – and warns that AI makes it “much easier and faster to create completely realistic-looking, fabricated identities” with rich digital backstories. This could mean a scammer uses your stolen ID numbers or documents in combination with a fake name and AI-generated face to open bank accounts or defraud others. Protect yourself by safeguarding sensitive data like ID numbers, and monitor your financial statements and credit reports for any unfamiliar accounts or charges. Organizations, on the other hand, should tighten customer onboarding checks – for example, use document verification and even AI-based face-match or liveness tests to ensure a new client or employee is real. By reducing the data you leak and quickly catching misuse of your identity, you make it much harder for AI-fueled fraudsters to succeed.

7. Use Advanced Tools – Leverage AI for Defense

Fighting AI with AI is becoming an essential strategy in cybersecurity. Just as criminals have new tools, so do defenders. Next-gen security software can detect anomalies that humans might miss. For example, email security gateways now use machine learning to flag phishing attempts by analyzing subtle aspects of writing style and context. Anti-fraud systems at banks use AI to spot unusual transaction patterns in real time and stop fraudulent wire transfers. Consider investing in solutions that include deepfake detection capabilities – these can analyze video or audio to find signs of manipulation (blurriness in facial edges, robotic tones, etc.).
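As a rough illustration of how “unusual transaction patterns” can be flagged, the sketch below uses a simple statistical rule (the modified z-score based on the median absolute deviation). Real bank systems use far richer machine-learning models; the function name, sample amounts, and minimum-history rule here are assumptions for illustration.

```python
from statistics import median


def is_unusual(history, amount: float, threshold: float = 3.5) -> bool:
    """Flag an amount that sits far from the account's typical transactions.

    Uses the modified z-score based on the median absolute deviation (MAD),
    which is less distorted by past outliers than a mean-based z-score.
    """
    if len(history) < 5:
        return True  # too little history to judge: send for manual review
    mid = median(history)
    mad = median([abs(x - mid) for x in history])
    if mad == 0:
        return amount != mid
    return abs(0.6745 * (amount - mid) / mad) > threshold


# Hypothetical past transfers for one account, in UGX.
past = [50_000, 60_000, 55_000, 52_000, 58_000, 61_000]
print(is_unusual(past, 57_000))     # typical amount
print(is_unusual(past, 5_000_000))  # far outside the usual range
```

A flagged transfer would not be rejected outright; it would be held for the kind of human verification described in the earlier tips.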

In the identity verification industry, “liveness detection” is being used to thwart deepfakes: the system checks if a selfie is from a live person or an AI-generated image. Keep your software and defences updated as well: attackers may employ AI to find and exploit any unpatched vulnerability faster than ever. Notably, security researchers have seen AI models produce polymorphic malware – malicious code that constantly changes its signature to evade antivirus programs. To counter this, ensure you’re using updated anti-malware tools that can detect behaviour anomalies, not just known virus signatures.

Businesses should also be aware of attacks on AI systems themselves – for instance, hackers might use “prompt injection” or feed bad data to confuse your company’s AI models. Make sure any AI tools you deploy (like chatbots or fraud detectors) are monitored and have safeguards. In short, stay technologically one step ahead: deploy the latest defensive tech and consider consulting cybersecurity experts who understand AI threats. The cost of prevention is far less than the cost of a major breach or scam.

8. Foster a Security-Aware Culture and Clear Protocols

Technology alone isn’t enough – people are the first line of defence. Cultivate a culture of security in your organization (and even within your family at home). This means regularly discussing new scam techniques, encouraging everyone to double-check strange requests, and making cybersecurity “everyone’s job.” Leadership should set the tone: executives and IT professionals must communicate the importance of these threats in plain language. In many places, digital adoption has outpaced cybersecurity awareness, creating a dangerous gap. Closing that gap involves continuous training and empowerment.

Employees should feel safe to report a suspected phishing email or to question a directive that seems off, without fear of reprimand for “slowing things down.” Having clear protocols in place is key – for example, a documented incident response plan for fraud. If a staff member suspects a deepfake call or a phishing attack, they should know exactly how to escalate it and who to inform. Simulate scenarios: what should an employee do if they receive a call from “IT support” asking for a password? What if the media department finds a fake video of your CEO circulating online? Running through these drills can make the real event much less chaotic. As one African cybersecurity leader put it, educating people to adopt a “zero-trust mindset” about online content is a powerful deterrent to deepfake scams. In practice, that means instilling a healthy scepticism and verification habit at all levels.

Security is a shared responsibility – when everyone from the CEO to the newest intern knows the playbook for spotting and stopping scams, your human firewall becomes strong.

9. Stay Informed on Emerging Scam Tactics

The threat landscape is evolving rapidly, so make a habit of staying up-to-date with the latest fraud trends. Today it might be deepfake videos; tomorrow it could be AI-driven voice assistants making scam calls en masse. Subscribe to reputable cybersecurity newsletters, follow alerts from authorities, and share updates within your network. For instance, in 2024 the U.S. Treasury’s Financial Crimes Enforcement Network (FinCEN) issued an alert to financial institutions specifically about fraud schemes using deepfake media. Such reports often detail the red flags and patterns to watch for – extremely useful information to incorporate into your defences. Industry reports and surveys can also reveal how widespread certain scams are becoming.

One survey in late 2024 found that just over half of businesses in the US and UK had been targeted by deepfake-powered scams, and 43% actually fell victim. Statistics like that serve as a wake-up call; they underscore that these aren’t one-off anecdotes but a common threat. By keeping informed, you won’t be caught off guard by new developments – whether it’s a spike in AI-generated fake invoices, or a new form of synthetic ID fraud hitting banks. Knowledge is power: consider it an early warning system that lets you adapt your fraud prevention measures proactively. Attending webinars, industry conferences, or even community IT security meetups can also provide valuable insights (and often, real case studies) about what’s coming next. The more you know about the enemy’s playbook, the better you can preempt it.

10. Adopt a “Zero-Trust” Mindset Online

Finally, approach your digital interactions with a “zero-trust” mindset – in essence, verify everything. This doesn’t mean being paranoid or refusing to use technology; it means maintaining a healthy level of suspicion about anything unsolicited and requiring proof or authentication for claims. If you receive an email with an attachment, don’t assume it’s safe – scan it and confirm the sender. If you see a viral social media post about a giveaway, don’t assume it’s true – check the official source. Especially in the era of AI, seeing (or hearing) is not always believing. Treat every surprising piece of online content as guilty until proven innocent. This mindset is echoed by cybersecurity experts worldwide as a key principle for digital safety.

For organizations, “zero trust” is also a technical framework (never trust, always verify) that can be adopted for network access, but here we mean it more generally: don’t inherently trust digital content just because it looks real. Train yourself to ask: “Could this be fake? Could someone be impersonating or manipulating here?” Then verify via independent channels. By adopting zero-trust as a personal habit, you’ll naturally implement many of the tips above in daily life. You’ll double-check identities, scrutinize media, and question odd requests as second nature. That is exactly the kind of cautious behaviour that foils AI-assisted fraudsters. In an age when criminals can digitally mimic voices, faces, and documents, trust must be earned, not given.

Conclusion

Artificial intelligence is transforming our world, and while it brings incredible innovations, it’s also supercharging the dark art of fraud. Scams that leverage AI – whether through deepfake videos, synthetic identities, or intelligent malware – are more convincing and scalable than traditional tactics. Both individuals and organizations therefore need to elevate their vigilance. By applying the ten strategies above, you build layered defences: technological, procedural, and human. We’ve seen that awareness and scepticism can stop a multimillion-dollar deepfake heist in its tracks and that a commitment to verification and education can immunize a workforce against even the cleverest phishing email.

As one CIO noted, the number and sophistication of attacks have been rising sharply in recent months, a trend echoed across Africa and the globe. The escalation of AI-assisted cybercrime is a reality, but it doesn’t have to be a losing battle. Armed with the right knowledge and tools, you can spot the tell-tale signs of fraud early and guard yourself and your organization effectively. In this new era of intelligent threats, a combination of human caution and AI-powered defences will be our best armour. Stay informed, stay alert, and remember: verify before you trust, especially when AI is involved.
